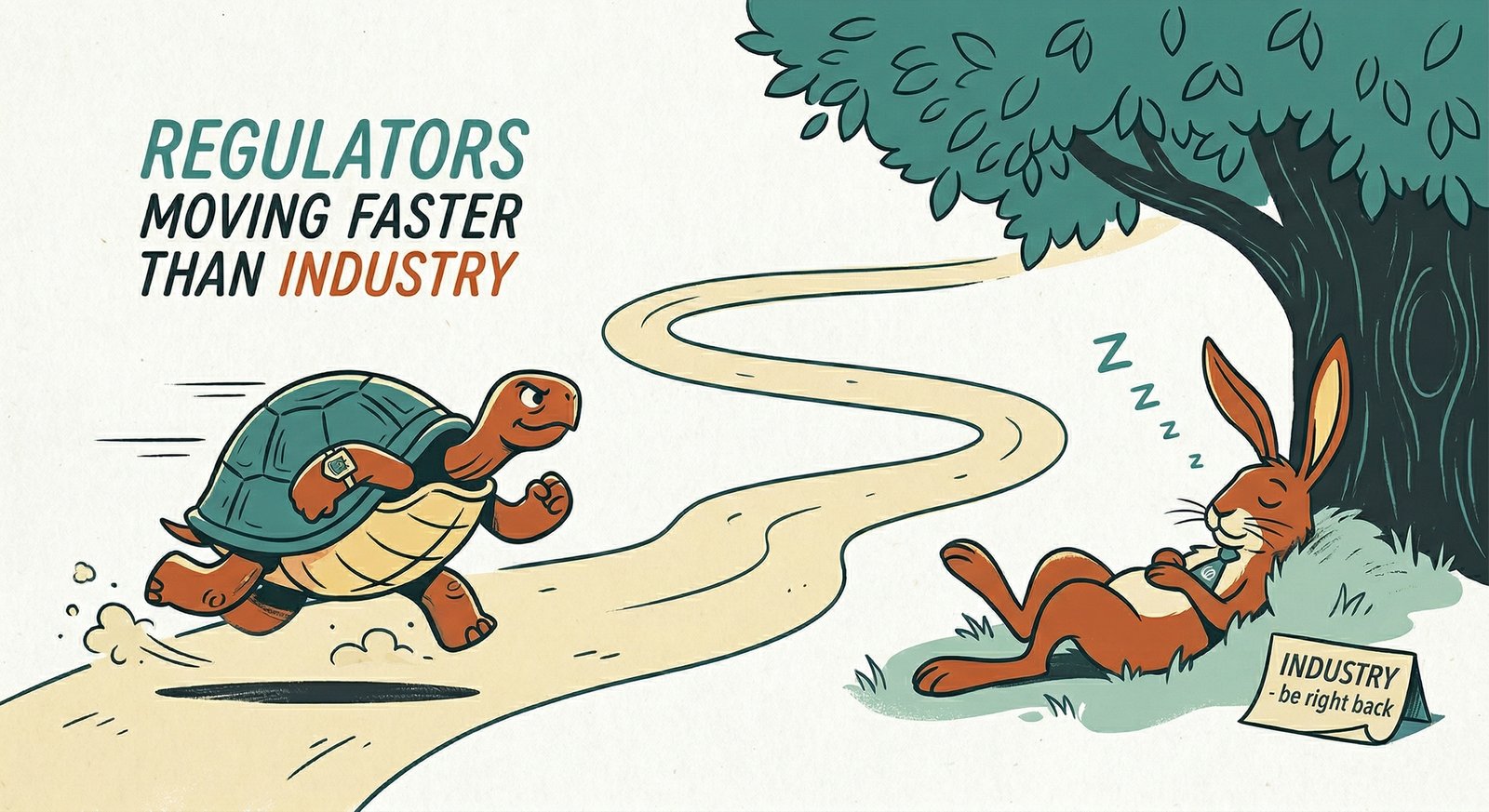

Regulators Are Moving Faster Than Industry

Fabrizio Maniglio

October 1, 2025

For the first time, regulations are being written for applications that don’t exist yet.

That sentence should stop you. Historically, the pattern has always been the same: industry innovates, regulators catch up. Seatbelts existed before seatbelt laws. Social media existed before data privacy regulation. Cloud computing existed before GxP cloud guidance.

With AI in life sciences, it’s reversed. The draft EU GMP Annex 22 regulates AI applications that pharma has not yet deployed. This is unprecedented.

How We Got Here

There was genuine hesitation across the industry, and genuine demand for guidance. Companies were asking “what does the FDA say?” before making a move. Regulators tried to fill the gap. That’s understandable and, in many ways, responsible.

But AI moves too fast. By the time Annex 22 reached public consultation, the technology had moved so far ahead that the regulation couldn’t account for capabilities that were already available. The gap between what the guidance covers and what the technology can do was already wide at publication. It’s only getting wider.

The Perverse Outcome

Annex 22 excludes probabilistic models from GxP-critical applications. That exclusion covers the vast majority of modern AI, including large language models. The regulation takes an already conservative industry and gives it regulatory cover to stay conservative.

The “holding pattern” becomes self-reinforcing: companies wait for regulatory clarity before acting, regulators write guidance based on what industry has (or hasn’t) deployed, and the cycle continues. Meanwhile, the technology keeps advancing and the gap keeps growing.

What Should Have Been Different

The approach should have been risk-based, not categorical. Annex 11 already has good language around risk. The framework should say: based on the risk of your application, the novelty of the AI, and the burden of proof, here’s what’s acceptable with what guardrails.

Not: exclude an entire category of technology.

The only question that should matter is a comparison with the status quo: is it better than what we’re doing now? Not “is it perfect?” Not “is it fully transparent?” Because humans aren’t fully transparent either. We just pretend they are because we’ve been doing it that way for decades.

Why This Moment Matters

This is a unique historical moment worth documenting. The gap between AI capability and regulatory permission is widening, not narrowing. Companies are using regulatory uncertainty as an excuse not to act. And the regulatory posture, well-intentioned as it is, risks locking the industry into a level of AI adoption that doesn’t match what the technology can deliver.

This isn’t an argument against regulation. It can’t be the Wild West. But blanket exclusion isn’t the answer either. Risk-based thinking, proportionate requirements, and evidence-based adoption are the path forward.

The regulators moved first. Now industry needs to engage, not wait for the next draft.

Fabrizio Maniglio

Keynote speaker & thought leader helping life sciences organizations navigate AI, quality, and the humans caught between the two.